29 March 2024



Written by: Rob van der Veer

What sort of problems are we talking about here? Consider sports apps, for example, that share your location with advertisers – even when you’re not exercising. Or take Ashley Madison, the Canada-based site for extramarital dating. Its security wasn’t up to scratch, which led to all customer data being leaked – despite its various certificates (such as ‘100% secure’). Its biggest mistake was not so much poor security as storing address data that was no longer needed and unnecessarily recording IP addresses. This meant the whole world could see who had committed adultery, and even who had done so while at work. An unpleasant situation, with many consequences.

“The smallest decision made by a programmer can lead to the biggest privacy issues.”

One source of hope for the protection of our personal data is the renewed attention to privacy following the European General Data Protection Regulation (GDPR) legislation, which makes organisations accountable for proper handling of personal data. This includes collecting only necessary personal information, obtaining consent for data usage, deleting data whenever possible, not sending it to others, encrypting where necessary, and ensuring systems cannot be hacked or otherwise leak data.

If you are responsible for ensuring all the correct organisational and technical measures have been taken, how do you determine if privacy principles have been built into IT processes, and where additional measures are necessary?

Unfortunately, it’s difficult to see exactly what happens to personal data by looking at information systems from the outside or by reading their documentation. Systems are often complex, dynamic, or outdated, and in the worst cases a combination of all three. On top of that, the devil is in the details. The smallest decision made by a programmer can lead to the biggest privacy issues: store this data, send it over there, request that GPS location, etc.
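To make this concrete, here is a minimal sketch of what such a small decision can look like in code. It is purely illustrative and not taken from any real system: the `WorkoutEvent` type, its field names, and the `minimise` function are all assumptions. The idea is simply that the code decides, per field, whether there is a purpose for keeping it.

```python
from dataclasses import dataclass
from typing import Optional


# Hypothetical event sent by a sports app; all field names are assumptions.
@dataclass
class WorkoutEvent:
    user_id: str
    latitude: float
    longitude: float
    ip_address: str
    exercising: bool


def minimise(event: WorkoutEvent) -> Optional[dict]:
    """Return only the fields needed to record a workout, or None."""
    if not event.exercising:
        # No workout in progress, so there is no purpose for the location.
        return None
    # The IP address is not needed to record a workout, so it is never stored.
    return {
        "user_id": event.user_id,
        "latitude": event.latitude,
        "longitude": event.longitude,
    }
```

A reviewer reading this function can see at a glance what is stored and under which condition, which is exactly the kind of decision that stays invisible from the outside.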

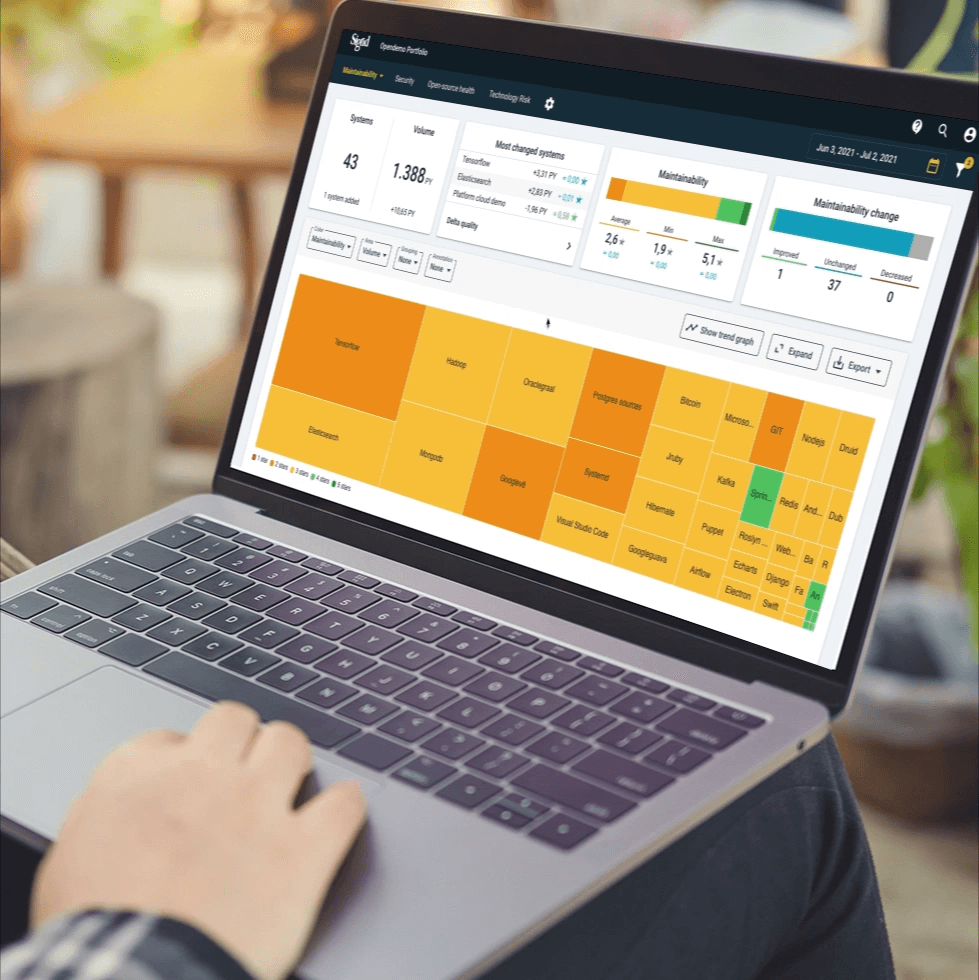

If you want to know what really happens to data in IT, have a good look at the source code. It contains all the decisions made by the programmer, and therefore allows you to discover and remedy violations of privacy principles in order to comply with the law. It also saves time and money when software is no longer a ‘black box’, because certain organisational and technical measures, such as encryption, turn out not to be necessary everywhere.

Organisations are now busy creating GDPR administrations, often based on assumptions regarding what happens in their information systems. They are reluctant to go deeper into IT, because there is so much to investigate. Where should you start? Good news: you can get started immediately with a risk-based approach, by first examining the software system with the highest risk. Which system that is depends on the type of application, the technology used, which personal data is processed, etc. This initial look provides much insight into the organisation’s typical challenges, and into whether other systems or components require further examination until the risk level is acceptable.

The GDPR describes a data protection impact assessment as a means of estimating the risks in data processing. It is important to note that this is not a compliance check, but a risk assessment, which helps determine which systems require further examination.

“Taking privacy into consideration when handling personal data is very new to software builders.”

The GDPR requires privacy principles to be applied in existing systems and incorporated into new ones. However, it turns out the correct method for handling personal data is new to software builders. Used to seeing data as the new gold, they have to say goodbye to learned principles, such as storing as much data as possible for as long as possible “because that’s useful for marketing, for example”.

At software engineer meetings, we often ask whether those in attendance can find the security problems in an example piece of code. We call this “Find the flaw”. Usually, between 20% and 80% of problems are found, but when presented with a privacy example only 0–40% are found. Developers appear to have a blind spot when it comes to handling personal data responsibly. Typically, they regard this as an issue for legal experts to deal with. While it is true that legal experts are sometimes involved, the developer has an important role in recognising when it’s time to ask: “is this allowed?”

The results of a source code examination for privacy issues can be used to increase awareness and insight, and to instruct developers on when to pay special attention.

Developers should treat personal data as if it’s radioactive gold: it’s valuable, but be careful how you handle it and where you leave it, and keep it to a minimum.

We sometimes notice initial resistance to the idea of privacy, because it’s seen as standing in the way of data-driven working. But developers’ enthusiasm increases when we show them techniques for taking advantage of data while still safeguarding privacy. Think of smart aggregation, pseudonymisation/anonymisation, separate storage, etc. With the right knowledge, it’s often possible to minimise concessions.
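As a rough illustration of two of these techniques, the sketch below shows keyed pseudonymisation and aggregation with a minimum group size. It is one possible approach under stated assumptions, not a prescribed method: the function names, the `city` field, and the threshold of 5 are all hypothetical, and the secret key is assumed to be stored separately from the data.

```python
import hashlib
import hmac
from collections import Counter


def pseudonymise(user_id: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash (HMAC-SHA256).

    Without the key, the pseudonym cannot easily be linked back to the
    user. The key is assumed to be kept apart from the data itself.
    """
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def aggregate_by_city(records: list, min_group_size: int = 5) -> dict:
    """Count records per city, hiding groups too small to be safe.

    The 'city' field and the threshold of 5 are illustrative assumptions.
    """
    counts = Counter(record["city"] for record in records)
    return {city: n for city, n in counts.items() if n >= min_group_size}
```

Analysts can still join records belonging to the same pseudonym and report on trends per city, while direct identifiers and very small groups stay out of the reporting data. Note that pseudonymised data generally still counts as personal data under the GDPR, so this reduces risk rather than removing the obligation to protect it.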

To remove and prevent unpleasant privacy surprises in IT, it is necessary to investigate what happens with data in the source code and take appropriate action. The risks of fines, liability, loss of customers, and damage to reputation are drastically reduced as a result. It also improves insight into how to instruct developers properly and where to invest in appropriate measures. This way, competing on privacy becomes a possibility. What you don’t collect, and what you protect well, cannot be leaked. Privacy has grown from a nice-to-have into an absolute requirement, and professional organisations can set a good example here, if only to prevent them from being used as an example of how not to do things.

Author: Rob van der Veer, Senior Director, Security & Privacy and AI
