29 March 2024

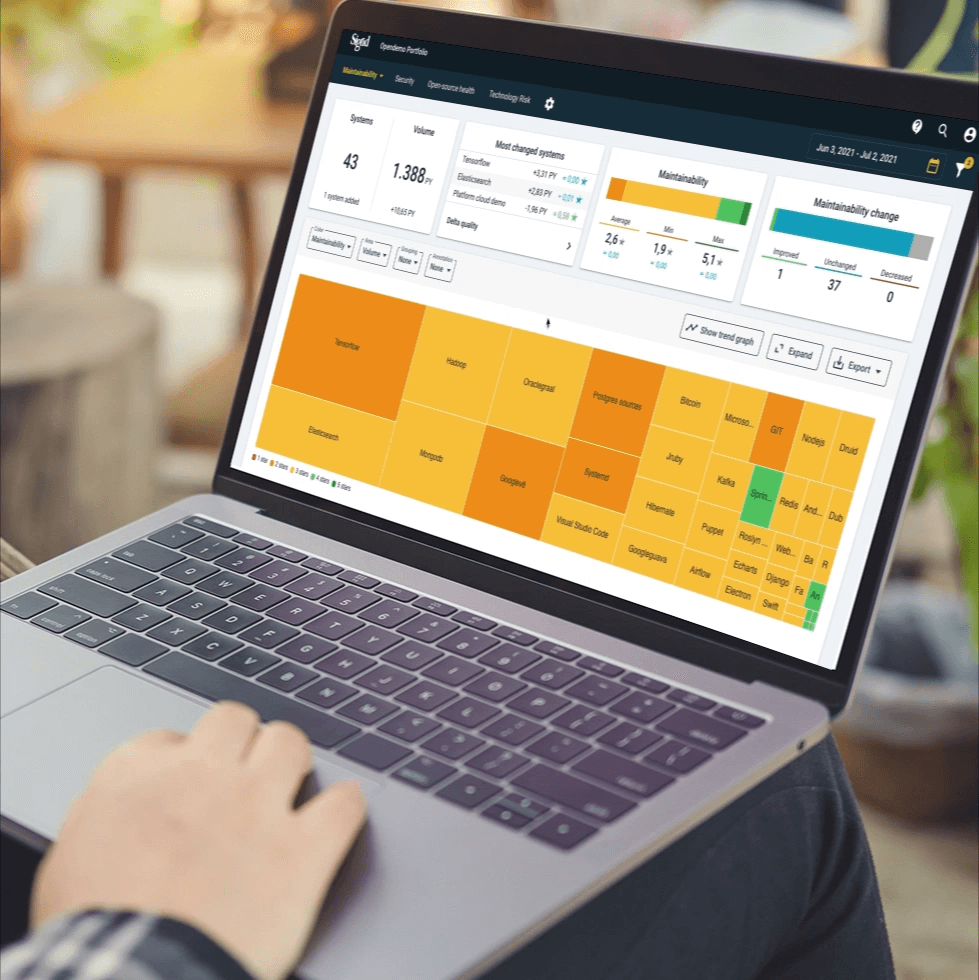

Request your demo of the Sigrid® | Software Assurance Platform:

2 min read

Written by: Rob van der Veer

Back in the ‘90s, I was an engineer at pioneering data mining company Sentient Machine Research. For our implementations, 90% of the work went into collecting and preparing data. Now 25 years later, baggy jeans are back in style, and data mining is called AI, but the work is still quite similar. Even though AI algorithms learn from example data, they still require a substantial amount of software engineering to make them work – especially when it comes to data preparation. This is a challenge because data scientists are managed and trained as analytical explorers, not as software engineers, per se. Their drive is to create insights and models quickly; not to build something that is reusable, easy to change, and testable. The big technical hurdles used to be accuracy and scalability of AI, but now that those have been largely addressed, the next hurdle is change. Artificial Intelligence built on quicksand can’t keep up with an ever-changing world.

At Software Improvement Group, we analyze the quality of data preparation code on a daily basis and often conclude it needs to be completely rewritten from scratch in order to become testable and future-proof. We see long slabs of code with much duplication, little structure, no layers, and hardly any test code. When data scientists write this kind of code in the morning, their productivity is hindered by afternoon. This can get out of hand, bringing AI initiatives to a screeching halt.

A good solution is to train data scientists in writing quality code and using the many programming mechanisms that are available in today’s platforms. In order to support them, the quality of their code can be measured. This is easier said than done because great data scientists are hard to find, especially if they need to be great software engineers as well. Thankfully, there is also a solution for that: lower the bar by having moderate quality requirements for experimental code – which is a large part of their work. Once it becomes clear that code is going to be used for a longer time, the quality level requirement goes up and software engineers can then help refactor it to become testable and future-proof. This will save a great deal of time; time we can spend on figuring out how to control AI once it outsmarts us in a couple of decades.

References:

Hidden technical debt in machine learning systems by Sculley et al. – Google

Software engineering for machine learning: A case study by Amershi et al. – Microsoft

Author:

Senior Director, Security & Privacy and AI

We'll keep you posted on the latest news, events, and publications.