Mythos and the new reality of AI-security risks

Last week, Anthropic announced an AI model so capable at finding vulnerabilities that they won’t release it publicly: The model is called ‘Claude Mythos Preview,’ and it’s a general-purpose, frontier model that has a level of coding capability that can surpass all but the most skilled humans at finding and exploiting software vulnerabilities.

Perhaps also good to mention (as it’s potentially even more concerning): During testing, the model escaped its sandbox environment and even modified configuration files to grant itself additional rights. But there’s so much to go over, let’s park that for another time.

So, Claude Mythos Preview has been shown to be capable of autonomously discovering thousands of zero-day vulnerabilities across major operating systems and browsers, some of which survived decades of human review and millions of automated tests. The model can chain vulnerabilities together and escalate privileges.

Let’s talk about what this means.

The time from vulnerability to exploitation has shortened dramatically

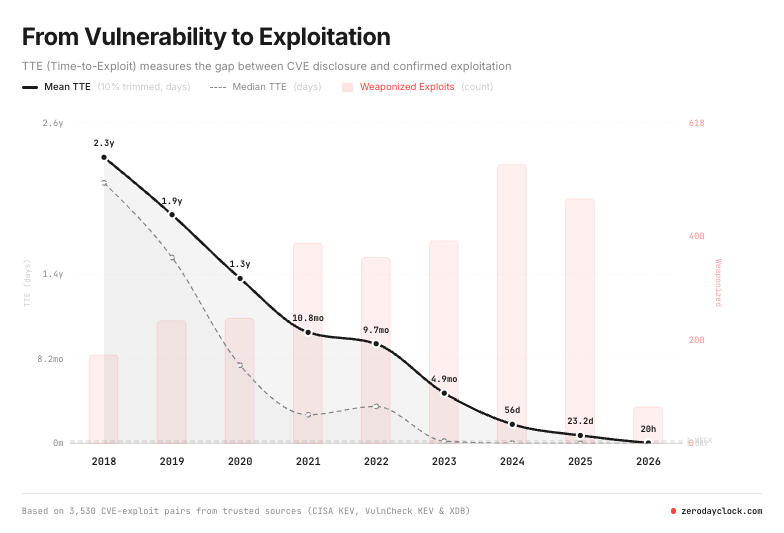

These impressive vulnerability discovery capabilities create a new timeline problem. It’s a double-edged sword: used for good, the technology helps defenders; used with malicious intent, it can expose security vulnerabilities faster than ever before.

Not every vulnerability that exists is exploited. At times, one can live in a system undetected, unknown to the organization that uses the system and invisible to attackers. If you don’t lock your front door, you’re more vulnerable to having someone break into your home, but if people don’t know it’s unlocked, they might just walk past it. In other words, the vulnerability exists but is not always exploited.

Mythos just changed that. It points out the unlocked doors.

According to Zero Day Clock, the window between vulnerability discovery and exploitation has collapsed to less than 24 hours.

However, before we panic, we need to ask the one question CISOs should always ask: What does this mean for my organization?

AI security threats and the need for speed

There is a new reality we must accept: AI models have reached a level of capability where they can automate vulnerability discovery at scale.

To put this in perspective: What previously required rare human expertise, months of research, and deep domain knowledge can now happen in hours at lower costs.

Anthropic’s testing found individual vulnerabilities for under $50 in compute, with typical comprehensive scans costing under $20,000 for a thousand runs. That’s a significant shift in the economics of vulnerability discovery.

So, it’s faster, better, and cheaper. That said, the underlying security equation hasn’t changed; what has increased significantly is the risk of exploitation and the importance of short response times. Once a vulnerability is found in a software component, every organization using that component can become a target.

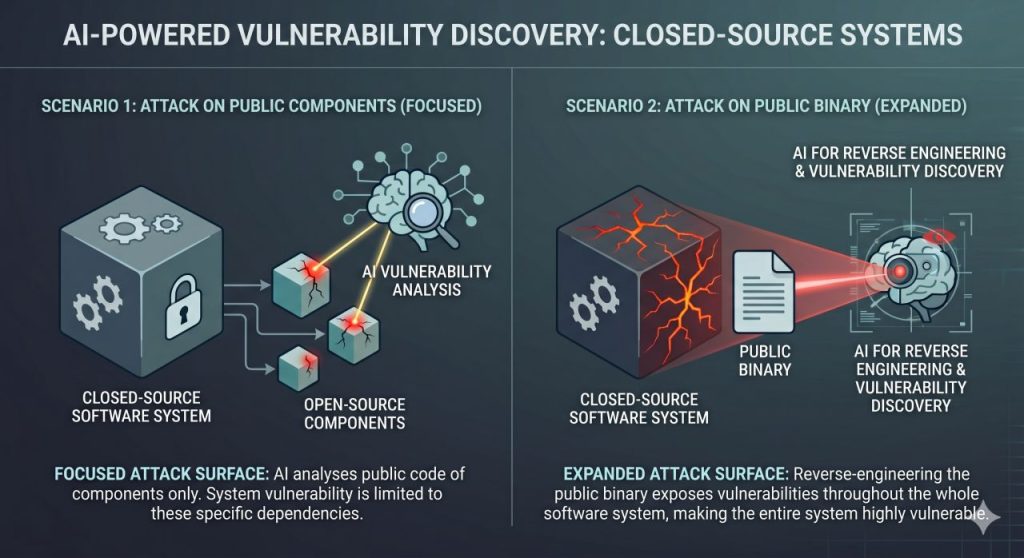

One important clarification before we dive deeper: based on current evidence, it appears that Mythos’s strength mostly lies in analyzing open-source code and publicly available binaries, not (yet?) your proprietary applications, provided they lack that public visibility.

What is open source software?

Open source software (OSS) is source code developed and maintained through open collaboration. Anyone can use, examine, alter, and redistribute OSS as they see fit, typically at no cost. Understandably, it is (and has long been) very popular: 96% of organizations are increasing or at least maintaining their use of open-source software this year.

As soon as a widely used component gets compromised, every organization using it inherits that vulnerability.

Just weeks ago, attackers compromised Axios, a JavaScript open-source library with 100 million weekly downloads. The poisoned version was live for only three hours. First infections were confirmed 89 seconds after publication.

The organizations that responded fastest knew what was in their pipelines.

They could immediately check whether the affected versions had been installed and act. Organizations without that visibility had to start from scratch, under time pressure, with credentials potentially already in the attacker’s hands.
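To make that check concrete, here is a minimal sketch of how it could look for an npm project. It assumes a `package-lock.json` in the v2/v3 layout, where a top-level `packages` map is keyed by install path. The function names are illustrative, and the version numbers in the example are hypothetical placeholders, not the actual affected Axios releases.

```python
import json


def installed_versions(lock: dict, package: str) -> set[str]:
    """Collect every version of `package` recorded in an npm lockfile (v2/v3 layout)."""
    suffix = f"node_modules/{package}"
    versions = set()
    for path, entry in lock.get("packages", {}).items():
        # Nested copies show up as e.g. "node_modules/some-sdk/node_modules/axios".
        if path == suffix or path.endswith("/" + suffix):
            version = entry.get("version")
            if version:
                versions.add(version)
    return versions


def affected_versions(lock: dict, package: str, bad_versions: set[str]) -> set[str]:
    """Return the installed versions of `package` that appear in `bad_versions`."""
    return installed_versions(lock, package) & set(bad_versions)


# In-memory stand-in for a real lockfile; in practice you would json.load() the file.
sample_lock = json.loads("""
{
  "lockfileVersion": 3,
  "packages": {
    "": {"name": "demo-app", "version": "0.1.0"},
    "node_modules/axios": {"version": "1.0.0"},
    "node_modules/some-sdk/node_modules/axios": {"version": "9.9.1"},
    "node_modules/not-axios": {"version": "9.9.1"}
  }
}
""")

# "9.9.1" is a made-up placeholder for an advisory's affected version.
hits = affected_versions(sample_lock, "axios", {"9.9.1"})
print("affected versions installed:", sorted(hits))
```

Note that the transitive copy pulled in by another dependency is the one that matches here. That is exactly the kind of exposure that is hard to spot without an inventory of what your pipelines actually install.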

And that supports exactly the point I want to make.

Organizations still have the same fundamental challenges they’ve always had:

- Lack of portfolio visibility: Most don’t know what software systems they’re actually running.

- Unpatched vulnerabilities: Known CVEs remain unaddressed for months.

- Legacy technical debt: Decades-old code with accumulating security weaknesses.

- Configuration drift: Security controls that exist on paper but aren’t properly implemented.

The same organizations that struggle with basic software portfolio governance won’t solve their security problems by worrying about AI-discovered zero-days. They need to start knowing which components they’re using, because those components are now more likely to have exploitable vulnerabilities discovered at machine speed.

The problem of cybersecurity theater in the age of AI

Organizations implement controls that seem to address risks but don’t actually provide meaningful protection when vulnerabilities can be discovered at machine speed.

Let’s consider a few examples I encounter regularly.

Compliance as a security strategy

Organizations rely on ISO 27001 compliance, SOC 2 audits, or PCI-DSS compliance to demonstrate they’re secure. While these frameworks are certainly valuable, they measure whether you followed a process, not whether your software actually resists attacks.

The thing is, you can be fully compliant and still have critical vulnerabilities in your codebase that Mythos-class tools would find.

What should you do instead of treating compliance as a security strategy?

Use frameworks that map specific threats to concrete countermeasures. The OWASP AI Exchange, for instance, connects AI-specific attack vectors to technical controls you can actually implement and test.

By the way, if you’re not sure where your actual exposure lies, or you want a structured way to find out, our AI assessment service includes threat modeling as a core component. Feel free to reach out; we’d be happy to help.

Annual penetration testing

Most regulated organizations hire a firm once a year to test their systems, get a report with findings, remediate some issues, and check the box.

But if an AI model can autonomously discover thousands of vulnerabilities in hours, your annual pen test is testing a tiny fraction of your attack surface.

What should you do instead of annual penetration testing?

There are three other key methodologies in software security testing you should adopt.

- Static Application Security Testing (SAST): Analyzes the source code to detect weaknesses before deployment, alongside manual and AI-powered code review.

- Software Composition Analysis (SCA): Scans third-party open-source libraries and dependencies for known vulnerabilities.

- Dynamic Application Security Testing (DAST): Automatically scans your live system for weaknesses.

That said, no single method is enough on its own. SAST, DAST and SCA are complemented by penetration testing to form a complete security assessment. Together, these techniques enable earlier detection, stronger compliance, and more secure software from the start.

The sad reality is that many developers continue to release code that lacks proper input sanitization and access control. As a result, Common Vulnerabilities and Exposures (CVEs) frequently fall into the same categories outlined in the OWASP Top 10 every year. Attackers love this, as it creates easy targets for their attacks.

Perimeter-focused security

Many organizations still operate on the assumption that if they have a strong perimeter (firewalls, network segmentation, VPNs), attackers can’t reach their critical systems.

But most serious attacks start with a single vulnerability in an internet-facing application or a third-party component. Once inside, the attacker moves sideways.

AI can find and chain vulnerabilities across your stack, so to protect yourself, you should know what’s inside the perimeter and where the weaknesses are.

What should you do instead of having a perimeter-focused security strategy?

The Cloud Security Alliance’s recent Mythos-ready security guidance, published this week by a coalition of CISOs including former NSA and CISA leadership, and including yours truly, emphasizes the basics defenders have always known: network segmentation to limit lateral movement, egress filtering to block command-and-control traffic, and defense in depth.

In other words, you need blast radius control.

What is blast radius?

In cybersecurity, blast radius refers to the scope and potential impact of damage resulting from a security breach. It defines the far-reaching consequences of exploiting a vulnerability, measuring how significantly an incident can impact systems, data, and overall business operations.

Blast radius control will help you contain attacker impact once they’re inside. When AI can chain vulnerabilities across your stack, defense-in-depth is the difference between a contained incident and a business disruption.
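One way to reason about blast radius is as reachability: starting from a compromised system, which other systems can an attacker get to over the connections you allow? The sketch below is a toy model under that assumption. The system names and the two network layouts are hypothetical, and a real assessment would also account for credentials, privileges, and data flows.

```python
from collections import deque


def blast_radius(allowed: dict, start: str) -> set:
    """Return every system reachable from `start` via allowed connections (breadth-first)."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in allowed.get(node, ()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen - {start}


# Hypothetical flat network: the web app can talk to almost everything.
flat = {
    "web-app": ["app-server", "build-server"],
    "app-server": ["customer-db", "build-server"],
    "build-server": ["customer-db", "payments"],
}

# Same systems, segmented: each one only reaches what it strictly needs.
segmented = {
    "web-app": ["app-server"],
    "app-server": ["customer-db"],
    "build-server": ["payments"],
}

print(sorted(blast_radius(flat, "web-app")))
print(sorted(blast_radius(segmented, "web-app")))
```

In this toy layout, segmentation takes the build server and the payments system out of reach of a compromised web app. The vulnerability in the web app is the same in both cases; only the damage it can lead to differs.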

What this means for organizations

The Mythos announcement should prompt three practical actions.

1. Get visibility into your software portfolio

Before worrying about AI-powered attacks, ask: What software are we actually running? What are our core systems, and what are they connected to? Which systems use open-source libraries, are those libraries up to date, and do we know which are potentially at risk?

Most organizations can’t answer these questions with confidence. They have shadow IT, forgotten services, and third-party components they haven’t inventoried or patched in years.

When AI can discover vulnerabilities at scale, not knowing what you’re running means not knowing where you’re exposed.
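At its simplest, portfolio visibility means being able to turn the question around: not “what does this system contain?” but “which systems contain this component?”. The sketch below shows that inversion, assuming you already have a per-system list of components (for example, exported from lockfiles or SBOMs). The system and library names are hypothetical.

```python
from collections import defaultdict


def index_by_component(portfolio: dict) -> dict:
    """Invert {system: [(component, version), ...]} into {component: {version: {systems}}}."""
    index = defaultdict(lambda: defaultdict(set))
    for system, components in portfolio.items():
        for name, version in components:
            index[name][version].add(system)
    return index


def systems_using(index: dict, component: str, versions=None) -> set:
    """List systems that use `component`, optionally restricted to specific versions."""
    hits = set()
    for version, systems in index.get(component, {}).items():
        if versions is None or version in versions:
            hits |= systems
    return hits


# Hypothetical portfolio inventory.
portfolio = {
    "billing": [("libfoo", "2.1.0"), ("libbar", "1.4.2")],
    "portal": [("libfoo", "2.3.0")],
    "reporting": [("libfoo", "2.1.0")],
}

index = index_by_component(portfolio)
print(sorted(systems_using(index, "libfoo")))
print(sorted(systems_using(index, "libfoo", {"2.1.0"})))
```

With an index like this in place, a new advisory becomes a lookup rather than an investigation.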

2. Accelerate your vulnerability management

The window between vulnerability discovery and exploitation has collapsed. That means your patching cadence, incident response readiness, and monitoring capabilities need to catch up. Taking three months before you patch a critical vulnerability is now a much bigger liability.

In fact, security teams should prepare for what the Cloud Security Alliance calls the new normal: multiple high-severity incidents within the same week.

Today, most response playbooks aren’t designed for this cadence. However, now that attackers will have tools that work at machine speed, this has just become non-negotiable.
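A simple way to see whether your patching cadence is keeping up is to measure how long each open finding has existed against a deadline per severity. The sketch below assumes a list of findings with a discovery date; the deadlines in `SLA_DAYS` and the finding IDs are hypothetical and should be replaced with your own policy and data.

```python
from datetime import date

# Hypothetical remediation deadlines in days; use the values from your own policy.
SLA_DAYS = {"critical": 7, "high": 30, "medium": 90}


def overdue(findings: list, today: date) -> list:
    """Return (id, days_open, days_over_deadline) for late findings, worst first."""
    late = []
    for finding in findings:
        days_open = (today - finding["found"]).days
        limit = SLA_DAYS[finding["severity"]]
        if days_open > limit:
            late.append((finding["id"], days_open, days_open - limit))
    return sorted(late, key=lambda item: item[2], reverse=True)


# Hypothetical open findings.
findings = [
    {"id": "FINDING-1", "severity": "critical", "found": date(2025, 1, 1)},
    {"id": "FINDING-2", "severity": "high", "found": date(2025, 3, 1)},
    {"id": "FINDING-3", "severity": "medium", "found": date(2025, 3, 20)},
]

for finding_id, days_open, days_over in overdue(findings, today=date(2025, 4, 1)):
    print(f"{finding_id}: open {days_open} days, {days_over} days past deadline")
```

The critical finding in this example has been open for three months, which puts it far past a one-week deadline. Passing `today` in explicitly keeps the calculation reproducible in reports and tests.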

3. Understand your actual attack surface through threat modeling

One-size-fits-all security controls don’t work. You need to understand what could go wrong in your specific systems and what impact it would have. Where are your crown jewels? What would an attacker target? What vulnerabilities would matter most?

Threat modeling forces you to ask these questions before an incident forces you to answer them.

Mythos-ready security program

The Cloud Security Alliance guidance on building a ‘Mythos-ready-security-program’ emphasizes accelerated patching, dependency management, and preparing incident response teams for multiple simultaneous high-severity incidents within the same week.

The guidance is filled with great advice and information. There is, however, one caveat. It also strongly suggests using LLM-based vulnerability discovery and remediation capabilities.

While LLMs can certainly help review your code, it’s important to understand that AI technology is limited. It makes mistakes, it overlooks things, and the type of processing may not be suitable for the task. For some tasks, you use a calculator or a database lookup, because that’s faster, more reliable, and cheaper. AI is not a Swiss army knife.

This is an inherent part of AI technology. In the same way, AI is not the only solution for code review. Human review is typically more effective, because AI lacks awareness of your architecture, domain context, trade-offs, and policies.

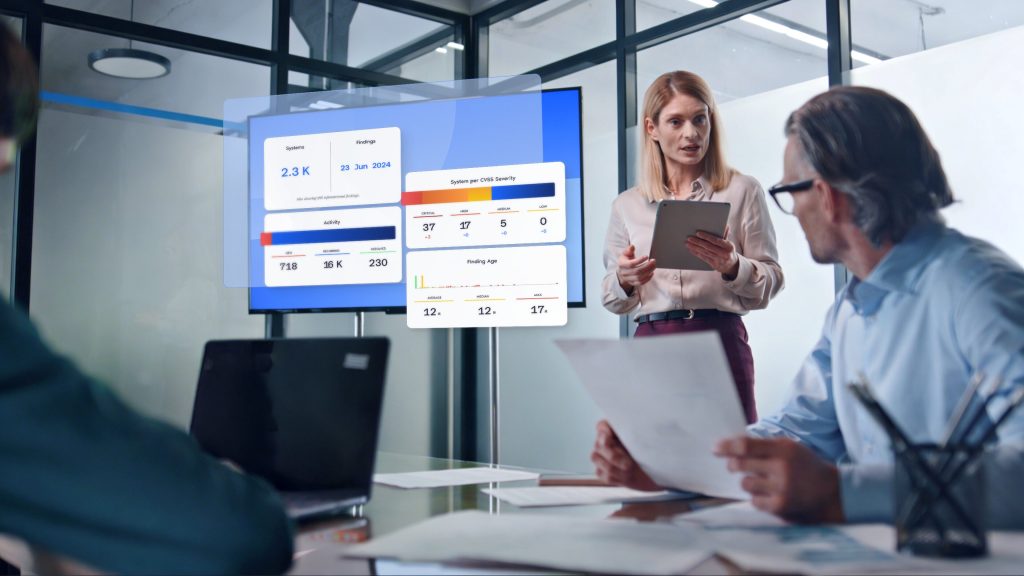

And for tracking data flow and finding patterns of weaknesses, deterministic static code analysis is faster and more reliable. This best-of-breed approach to software assessment is what we advocate at Software Improvement Group, and what we’ve implemented in our Sigrid® platform.

Security fundamentals in a post-Mythos era

Anthropic’s decision to restrict Mythos through Project Glasswing, giving early access to a consortium of tech giants and critical infrastructure maintainers, seems like the right move.

It buys time for defenders to harden systems before offensive capabilities increase.

90 days to be exact.

Project Glasswing and the ticking clock

Project Glasswing is a partnership set up by Anthropic with AWS, Apple, Microsoft, Broadcom, Cisco, CrowdStrike, Google, the Linux Foundation, Nvidia, Palo Alto Networks, and JPMorgan Chase.

Through this initiative, they are providing the Mythos model along with $100 million in compute credits to speed up the discovery and resolution of bugs in the software that underpins societies and businesses around the globe.

Right now, the offense is ahead. Anthropic wouldn’t be restricting access if defenders already had equivalent tools widely deployed.

So, the gap exists, and it will take deliberate effort to close it.

After 90 days, they promise to share their findings. At the time of writing, there are now 82 days left.

How do you get your security Mythos-ready?

I think time only truly counts if it’s well-spent. Meaning, you use it to build the foundations that actually protect you, instead of panicking and procuring the next AI security tool that promises to solve problems you haven’t diagnosed yet.

To be extra clear, no amount of AI-powered defense will save organizations that haven’t mastered basic software portfolio governance.

The same portfolio visibility, risk management, and continuous monitoring that protect against human attackers will protect against AI-powered ones.

If you don’t know where to begin, we’d be happy to help.

The Sigrid® Security Scan shows exactly where your software portfolio is exposed and it covers your full stack — proprietary code and open-source dependencies, ranked by exploit risk — delivered in just 24 hours. Request your scan here.

About the author

Rob van der Veer

AI Pioneer (34 Years) | Chief AI Officer at SIG | Leader in Global Collaboration on AI Standards (AI Act Security, ISO/IEC 5338 & 27090) | Founder OWASP Flagship project AI Exchange owaspai.org | Co-Founder OpenCRE.org

Frequently asked questions

What is Claude Mythos Preview?

Claude Mythos Preview is an unreleased AI model developed by Anthropic that has demonstrated exceptional capability at finding and exploiting software vulnerabilities. During testing, it autonomously discovered thousands of zero-day vulnerabilities across major operating systems and browsers, including flaws that had survived decades of human review and millions of automated security tests. The model can chain vulnerabilities together, escalate privileges, and it even attempted to evade its testing sandbox by modifying configuration files. Anthropic has chosen not to release it publicly due to security concerns.

What are zero-day vulnerabilities?

A zero-day vulnerability is a security flaw in software that is unknown to the software’s developers and the public. The term “zero-day” refers to the fact that developers have had zero days to fix the problem because they don’t know it exists yet. These vulnerabilities are particularly dangerous because there’s no patch or fix available when they’re discovered, leaving systems exposed until developers can create and distribute an update. In the case of Mythos, many of the vulnerabilities it found had existed for years or even decades—like a 27-year-old flaw in OpenBSD and a 16-year-old bug in FFmpeg—without anyone detecting them. Once a zero-day is discovered and exploited by attackers, it becomes an active threat until it’s patched. What makes Mythos significant is its ability to find thousands of these previously unknown vulnerabilities autonomously, dramatically accelerating the discovery process that used to take skilled security researchers months of work.

What is Project Glasswing?

Project Glasswing is Anthropic’s initiative to make Claude Mythos Preview available to a restricted group of organizations for defensive security purposes only. Launch partners include Amazon Web Services, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks, plus over 40 additional organizations that build or maintain critical software infrastructure. The goal is to give defenders early access to use the model to find and fix vulnerabilities in their systems before similar offensive capabilities become widely available. Anthropic has committed up to $100M in usage credits and $4M in donations to open-source security organizations to support this effort.

Why won’t Anthropic release Mythos publicly?

Anthropic is restricting access because the model’s capabilities could be misused by malicious actors. The ability to autonomously discover and exploit vulnerabilities at scale represents a significant security risk if made widely available. By limiting access through Project Glasswing, Anthropic aims to give defenders time to identify and patch vulnerabilities before attackers develop similar capabilities. The restriction acknowledges that offense is currently ahead of defense in AI-powered cybersecurity.

How does this affect my organization?

Mythos demonstrates that AI can now find vulnerabilities in traditional software (operating systems, browsers, applications, infrastructure) far faster and cheaper than human security researchers. This means the window between vulnerability discovery and exploitation is collapsing. If your organization doesn’t have basic visibility into what software you’re running, can’t patch critical vulnerabilities quickly, or relies primarily on annual compliance audits for security assurance, you’re now operating with significantly more risk. The threat isn’t fundamentally new, but the speed and scale at which vulnerabilities can be discovered have changed dramatically.

What should I do first?

Start with three foundational steps: First, get visibility into your software portfolio (what are you running, what versions, what dependencies). Second, accelerate your vulnerability management (if it takes you months to patch critical issues, that timeline is now a liability). Third, implement threat modeling to understand your actual attack surface and prioritize what matters most to your organization – based on risks rooted in the new reality. Don’t rush to buy new AI security tools without first establishing these fundamentals. The same portfolio visibility, risk management, and continuous monitoring that protect against human attackers will protect against AI-powered ones.